ASP.NET development has grown rapidly in recent years, and many businesses now hire ASP.NET developers for their application development plans. One area where the framework deserves attention is search engine optimization (SEO). Let's talk about optimizing ASP.NET websites for search engines.

With ASP.NET, you can make websites more user-friendly, which in turn helps them rank better in search results. The framework is versatile, and its URL and routing features extend naturally to SEO work.

Uniform Resource Locators

A URL specifies the address of a page and the protocol used to retrieve it. In most cases, the protocol will be HTTP or HTTPS. It is followed by the domain and then a path that uniquely identifies the resource, ideally with some description of it.
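As a small illustration of those parts, the following sketch splits a URL with the `System.Uri` class. The URL itself is a made-up example, not one from a real site.

```csharp
using System;

public static class UrlParts
{
    public static void Main()
    {
        // Hypothetical URL, used only for illustration.
        var url = new Uri("https://www.example.com/articles/aspnet-seo-tips");

        Console.WriteLine(url.Scheme);       // the protocol part
        Console.WriteLine(url.Host);         // the domain part
        Console.WriteLine(url.AbsolutePath); // the path that identifies the page
    }
}
```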

Ideally, the URL should also include keywords that appear in the page title, description, and content. A URL that identifies a page only by an opaque value gives no indication of the resource behind it, so it is not descriptive, and it does not help search engines understand the page either. By comparison, the URLs that current ASP.NET tooling produces are better from an SEO point of view.

SEO and User-Friendly URLs

Older URL schemes rely on URLs matching physical file names and paths. Dynamic values, such as the identifier of the article to display, are then passed as query string parameters. For SEO, it is better to serve the article in a way that avoids query string parameters.

Search engine guidance is to avoid query strings where possible and, where they are needed, to keep them short and few in number. For dynamic data, ASP.NET provides a routing system that lets the developer configure URLs that carry the values as path segments instead.
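A minimal sketch of that idea for Web Forms, assuming the routing system meant here is ASP.NET routing (`System.Web.Routing`). The route name, URL pattern, and `Article.aspx` page are hypothetical.

```csharp
using System.Web.Routing;

public static class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        // Lets /articles/42/seo-tips be served by Article.aspx,
        // instead of exposing /Article.aspx?id=42 to visitors and crawlers.
        routes.MapPageRoute(
            "ArticleRoute",          // route name (hypothetical)
            "articles/{id}/{title}", // URL pattern with two dynamic segments
            "~/Article.aspx");       // physical page that handles the request (hypothetical)
    }
}
```

Inside the page, the segment values would typically be read from `Page.RouteData.Values`, for example `Page.RouteData.Values["id"]`.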

Friendly URLs and Their Routing Strategies

Friendly URLs for Web Forms are based on mapping the requested URL to a physical file. They follow the same file-system-based paradigm as the default setup, except that the file extension does not have to be specified. In addition, arbitrary values can be passed in the same URL as extra segments, which removes the need for query strings.

Extracting the values from those URL segments takes very little effort. Friendly URLs are delivered as a NuGet package, which is also included in newer Web Forms project templates.
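The sketch below shows how this might look with the `Microsoft.AspNet.FriendlyUrls` NuGet package, assuming that is the package meant above. The `Article` page and the meaning given to the first segment are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Web.Routing;
using Microsoft.AspNet.FriendlyUrls;

public static class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        var settings = new FriendlyUrlSettings
        {
            // Permanently redirect /Article.aspx to the extensionless /Article.
            AutoRedirectMode = RedirectMode.Permanent
        };
        routes.EnableFriendlyUrls(settings);
    }
}

// Code-behind for a hypothetical Article.aspx page.
public partial class Article : System.Web.UI.Page
{
    protected void Page_Load(object sender, EventArgs e)
    {
        // For a request to /Article/42/seo-tips the segments are "42" and "seo-tips".
        IList<string> segments = Request.GetFriendlyUrlSegments();
        if (segments.Count > 0)
        {
            string articleId = segments[0]; // assumed here to be the article identifier
            // Load and display the article for articleId.
        }
    }
}
```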

Important ASP.NET MVC Routing Interview Questions

ASP.NET MVC routing is a favorite topic that ASP.NET development companies ask about in MVC interviews. Here are some important questions about MVC routing. If you are a beginner in MVC and want to learn about it, read through these questions and make sure you understand the answers.

What is routing in MVC?

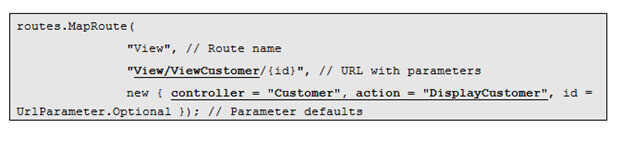

Routing helps define user-friendly URL structures and maps them to controllers. For example, suppose that when a user types http://localhost/View/ViewCustomer/, the request should go to the "Customer" controller and call the "DisplayCustomer" action. You define this by adding an entry to the "routes" collection using the "MapRoute" method. The entry specifies both the URL structure and its mapping to a controller and action.
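A sketch of such an entry for ASP.NET MVC 5 might look like this. The route name "View" and the optional `{id}` segment are assumptions added for illustration; the URL, controller, and action names come from the example above.

```csharp
using System.Web.Mvc;
using System.Web.Routing;

public class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        routes.MapRoute(
            name: "View",                     // unique key for this route (assumed name)
            url: "View/ViewCustomer/{id}",    // URL structure; {id} is an assumed optional segment
            defaults: new
            {
                controller = "Customer",      // resolves to CustomerController
                action = "DisplayCustomer",   // action method to call
                id = UrlParameter.Optional
            });
    }
}
```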

Where is the route mapping code written?

The route mapping code is written in the "RouteConfig.cs" file. It is registered from "Global.asax" in the application start event.
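In the default MVC project template, the registration looks roughly like the sketch below. The `RouteConfig` class is a minimal stand-in for the one in RouteConfig.cs, included only so the example is complete.

```csharp
using System.Web;
using System.Web.Mvc;
using System.Web.Routing;

// Global.asax.cs
public class MvcApplication : HttpApplication
{
    protected void Application_Start()
    {
        // Runs once when the application starts and fills the global route table.
        RouteConfig.RegisterRoutes(RouteTable.Routes);
    }
}

// App_Start/RouteConfig.cs (minimal stand-in)
public class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        routes.MapRoute(
            name: "Default",
            url: "{controller}/{action}/{id}",
            defaults: new { controller = "Home", action = "Index", id = UrlParameter.Optional });
    }
}
```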

Is it possible to map multiple URLs to the same action?

Yes, it is possible. You do it by creating two route entries with unique key names that specify the same controller and action.
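For instance, the two entries could be sketched as follows. The second URL and both route names are hypothetical; only the controller and action names are taken from the earlier example.

```csharp
using System.Web.Mvc;
using System.Web.Routing;

public class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        // First URL: /View/ViewCustomer
        routes.MapRoute(
            name: "ViewCustomer",
            url: "View/ViewCustomer",
            defaults: new { controller = "Customer", action = "DisplayCustomer" });

        // Second URL (hypothetical): /Customers/Show reaches the same action.
        routes.MapRoute(
            name: "ShowCustomer",
            url: "Customers/Show",
            defaults: new { controller = "Customer", action = "DisplayCustomer" });
    }
}
```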

What is the benefit of defining route structures in code?

It is usually the developer who writes the action methods. When the route is defined in code next to the action, the developer can see the URL structure up front instead of opening "RouteConfig.cs" and reading through long route definitions.
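Assuming the question refers to attribute routing in ASP.NET MVC 5, a route declared directly on the action might look like this. The URL reuses the earlier example.

```csharp
using System.Web.Mvc;

public class CustomerController : Controller
{
    // The URL is visible right where the action is written.
    // Requires routes.MapMvcAttributeRoutes() to be called during route registration.
    [Route("View/ViewCustomer")]
    public ActionResult DisplayCustomer()
    {
        return View();
    }
}
```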

Can developers use both attribute routing and convention-based routing in an MVC project?

Yes, developers can use both routing mechanisms in a single MVC project. Controller action methods that carry the [Route] attribute use attribute routing, and those without the [Route] attribute use convention-based routing.
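A sketch of a project that mixes the two, assuming ASP.NET MVC 5. The `Edit` action and the attribute route URL are hypothetical.

```csharp
using System.Web.Mvc;
using System.Web.Routing;

public class RouteConfig
{
    public static void RegisterRoutes(RouteCollection routes)
    {
        // Enables attribute routing; usually registered before the conventional routes.
        routes.MapMvcAttributeRoutes();

        // Convention-based route for every action without a [Route] attribute.
        routes.MapRoute(
            name: "Default",
            url: "{controller}/{action}/{id}",
            defaults: new { controller = "Home", action = "Index", id = UrlParameter.Optional });
    }
}

public class CustomerController : Controller
{
    // Attribute routing: reached through /View/ViewCustomer.
    [Route("View/ViewCustomer")]
    public ActionResult DisplayCustomer()
    {
        return View();
    }

    // Convention-based routing: reached through /Customer/Edit/5 (hypothetical action).
    public ActionResult Edit(int id)
    {
        return View();
    }
}
```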

When should attribute routing be applied?

Some specific URL patterns are difficult to support with convention-based routing. With attribute routing, such patterns are easy to achieve.
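One example of such a pattern is a URL where the identifier sits in the middle of the path. The sketch below assumes ASP.NET MVC 5 with `routes.MapMvcAttributeRoutes()` already called; the controller, action, and URL are hypothetical.

```csharp
using System.Web.Mvc;

public class OrdersController : Controller
{
    // Matches /customers/42/orders; the :int constraint rejects non-numeric ids.
    [Route("customers/{customerId:int}/orders")]
    public ActionResult ForCustomer(int customerId)
    {
        return Content("Orders for customer " + customerId);
    }
}
```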
