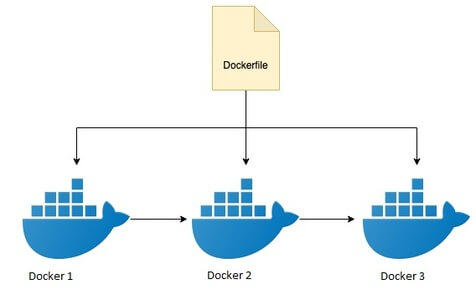

Technology: Docker is one of the most widely used container platforms for building images. Multi-stage builds were introduced in Docker 17.05, so version 17.05 or higher is required to use them.

Multi-stage builds help optimize a Dockerfile and make it easier to read and maintain.

Before Multi-Stage Builds

Before a production image is released, the code typically goes through several steps, such as unit testing, static code analysis, integration testing, and accessibility tests.

Running all of these steps in a single Dockerfile takes a long time, requires installing the tools and software needed at each step, and makes the Dockerfile hard to optimize. These tools are not needed to run the application in the Docker environment, yet every additional validation check increases the size of the final Docker image.

Multi-stage builds solve this type of problem. Just as a CI/CD pipeline splits a build into separate steps, we can split the Dockerfile into separate stages.

Multi-stage builds

Multi-stage builds are a way of organizing the Dockerfile to minimize the size of the final Docker image, which can also improve runtime performance, and to make the Dockerfile more readable.

A multi-stage build is created by giving each task its own stage. Each stage starts with its own FROM instruction and can use a different base image, and files can be copied from one stage to another.

For example, suppose we are creating a Docker image for a NodeJS application with the following stages: building, ESLint, code analysis, unit testing, accessibility tests, and finally deploying the application.

Building

In the first stage, we copy the source code into the Docker image and install the application.
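A minimal sketch of such a first stage might look like the following. The base image tag `node:18-alpine` and the `/app` working directory are assumptions chosen for illustration, not values from the original post.

```dockerfile
# Stage 0: copy the source code and install the application.
# The base image tag and the /app directory are assumptions.
FROM node:18-alpine
WORKDIR /app
COPY . .
RUN npm install
```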

ES-Linting

Next, we check the source code against a set of ES6 lint rules. Linting needs the source code inside the Docker environment, but copying it again would duplicate what the first stage already did, so we reuse the files copied in step 1 instead.

For this step, assume we have written an npm task named lint; this stage executes that task.

Here, --from=0 refers to the first stage (stages are numbered starting from 0), and the files are copied from that temporary container into the second one.

If we later want to change the order of the stages, this becomes complex, because every numeric reference in the Dockerfile has to be updated. If we give each stage a name, we can refer to it directly by that name, which removes the dependency on stage order.
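A lint stage that reuses the files from the first stage could be sketched as follows. The base image tag and the `/app` directory are assumptions; the `lint` npm task is the one described in the text.

```dockerfile
# Stage 0: copy the source code and install the application.
FROM node:18-alpine
WORKDIR /app
COPY . .
RUN npm install

# Stage 1: reuse the files from stage 0 instead of copying the source again.
FROM node:18-alpine
WORKDIR /app
COPY --from=0 /app ./
# Assumes package.json defines a "lint" script.
RUN npm run lint
```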

The stage definitions above can be updated to give the build stage a name and to copy files by referring to that name.

Here, we named the first stage builder, and the lint stage refers to it by that name.

We will also need the output files in the final stage, which is another reason to name the stage so that it can be referenced there.
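With named stages, the same two stages might be written like this. The stage name `builder` comes from the text; the name `lint`, the base image tag, and the `/app` directory are assumptions.

```dockerfile
# The AS keyword gives the stage a name that later stages can reference.
FROM node:18-alpine AS builder
WORKDIR /app
COPY . .
RUN npm install

# Refer to the build stage by name rather than by its index.
FROM node:18-alpine AS lint
WORKDIR /app
COPY --from=builder /app ./
RUN npm run lint
```

Because the reference is by name, reordering or inserting stages does not require updating the COPY instruction.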

Static Analysis

Next, we run code quality checks using a static code analysis tool. For example, we will use SonarQube, one of the most widely used open-source tools for static code analysis; the choice of tool varies from organization to organization.

Here again, we copy the source code from the build stage and execute the sonar npm task. Later, the final stage will use the outputs produced by this stage.

In the same way, we can write as many stages as we want. The final stage uses a base image of minimal size, copies the generated files from each earlier stage into the final image, and starts the application.

While developing the Dockerfile, we need to verify each stage and inspect its file system to check that the Docker instructions did what we expected.
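A sketch of the static analysis stage, assuming an npm script named `sonar` wraps the scanner, could look like this. The stage name `sonarqube` matches the one used later with the target argument; the base image tag and paths are assumptions.

```dockerfile
FROM node:18-alpine AS builder
WORKDIR /app
COPY . .
RUN npm install

# Static analysis stage: start from the files produced by the build stage.
FROM node:18-alpine AS sonarqube
WORKDIR /app
COPY --from=builder /app ./
# Assumes package.json defines a "sonar" script that runs the analysis.
RUN npm run sonar
```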
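Putting the stages together, a final stage might be sketched as below. The report directory `/app/sonar-report` and the `start` npm script are hypothetical, as are the base image tag and the `/app` directory; adjust them to what your own tasks actually produce.

```dockerfile
FROM node:18-alpine AS builder
WORKDIR /app
COPY . .
RUN npm install

FROM node:18-alpine AS sonarqube
WORKDIR /app
COPY --from=builder /app ./
RUN npm run sonar

# Final stage: a small base image that contains only what is needed at runtime.
FROM node:18-alpine
WORKDIR /app
COPY --from=builder /app ./
# Hypothetical output path of the sonar task.
COPY --from=sonarqube /app/sonar-report ./sonar-report
# Assumes package.json defines a "start" script.
CMD ["npm", "start"]
```

Only the last stage ends up in the resulting image; the earlier stages exist to produce files that are copied forward.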

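Using the --target argument of docker build, we can pass the name of the stage at which the build should stop.

For example, if we set the target to sonarqube, the build runs up to and including the sonarqube stage and then stops, so we can verify the stages up to that point.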
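As an illustration, the comments at the top of the following Dockerfile show how a build might be stopped at the sonarqube stage and then inspected. The image tag `myapp:sonarqube` is hypothetical, and the stage contents are the same assumptions used throughout.

```dockerfile
# Build only up to the sonarqube stage (image tag is hypothetical):
#   docker build --target sonarqube -t myapp:sonarqube .
# Then open a shell in the resulting image to inspect its file system:
#   docker run --rm -it myapp:sonarqube sh

FROM node:18-alpine AS builder
WORKDIR /app
COPY . .
RUN npm install

FROM node:18-alpine AS sonarqube
WORKDIR /app
COPY --from=builder /app ./
RUN npm run sonar
```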

Conclusion

In this blog post, we learned about multi-stage builds in a Dockerfile, the complexities that existed before them, and the advantages of using them.